Scaling acceptance tests

This chapter is a follow-up to Intro to acceptance tests. You can find the finished code for this chapter on GitHub.

Acceptance tests are essential, and they directly impact your ability to confidently evolve your system over time, with a reasonable cost of change.

They're also a fantastic tool to help you work with legacy code. When faced with a poor codebase without any tests, please resist the temptation to start refactoring. Instead, write some acceptance tests to give you a safety net to freely change the system's internals without affecting its functional external behaviour. ATs need not be concerned with internal quality, so they're a great fit in these situations.

After reading this, you'll appreciate that acceptance tests are useful for verification and can also be used in the development process by helping us change our system more deliberately and methodically, reducing wasted effort.

Prerequisite material

The inspiration for this chapter is born of many years of frustration with acceptance tests. Two videos I would recommend you watch are:

Dave Farley - How to write acceptance tests

"Growing Object Oriented Software" (GOOS) is such an important book for many software engineers, including myself. The approach it prescribes is the one I coach engineers I work with to follow.

GOOS - Nat Pryce & Steve Freeman

Finally, Riya Dattani and I spoke about this topic in the context of BDD in our talk, Acceptance tests, BDD and Go.

Recap

We're talking about "black-box" tests that verify your system behaves as expected from the outside, from a "business perspective". The tests do not have access to the innards of the system they test; they're only concerned with what your system does rather than how.

Anatomy of bad acceptance tests

Over many years, I've worked for several companies and teams. Each of them recognised the need for acceptance tests: some way to test a system from a user's point of view and verify it works as intended. But almost without exception, the cost of these tests became a real problem for the team.

Slow to run

Brittle

Flaky

Expensive to maintain, and seem to make changing the software harder than it ought to be

Can only run in a particular environment, causing slow and poor feedback loops

Let's say you intend to write an acceptance test around a website you're building. You decide to use a browser automation tool (like Selenium) driving a headless web browser to simulate a user clicking buttons on your website to verify it does what it needs to do.

Over time, your website's markup has to change as new features are discovered, and engineers bike-shed over whether something should be an <article> or a <section> for the billionth time.

Even though your team are only making minor changes to the system, barely noticeable to the actual user, you find yourself wasting lots of time updating your ATs.

Tight-coupling

Think about what prompts acceptance tests to change:

An external behaviour change. If you want to change what the system does, changing the acceptance test suite seems reasonable, if not desirable.

An implementation detail change / refactoring. Ideally, this shouldn't prompt a change, or if it does, a minor one.

Too often, though, the latter is the reason acceptance tests have to change, to the point where engineers even become reluctant to change their system because of the perceived effort of updating tests!

These problems stem from not applying well-established and practised engineering habits described by the authors mentioned above. You can't write acceptance tests like unit tests; they require more thought and different practices.

Anatomy of good acceptance tests

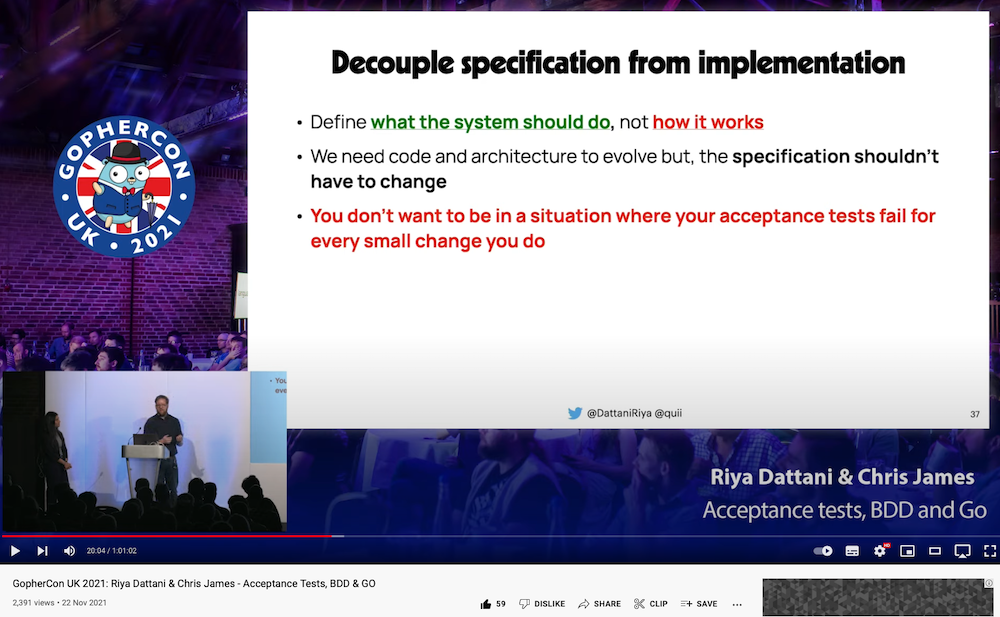

If we want acceptance tests that only change when we change behaviour and not implementation detail, it stands to reason that we need to separate those concerns.

On types of complexity

As software engineers, we have to deal with two kinds of complexity.

Accidental complexity is the complexity we have to deal with because we're working with computers, stuff like networks, disks, APIs, etc.

Essential complexity is sometimes referred to as "domain logic". It's the particular rules and truths within your domain.

For example, "if an account owner withdraws more money than is available, they are overdrawn". This statement says nothing about computers; this statement was true before computers were even used in banks!

Essential complexity should be expressible to a non-technical person, and it's valuable to have modelled it in our "domain" code, and in our acceptance tests.

Separation of concerns

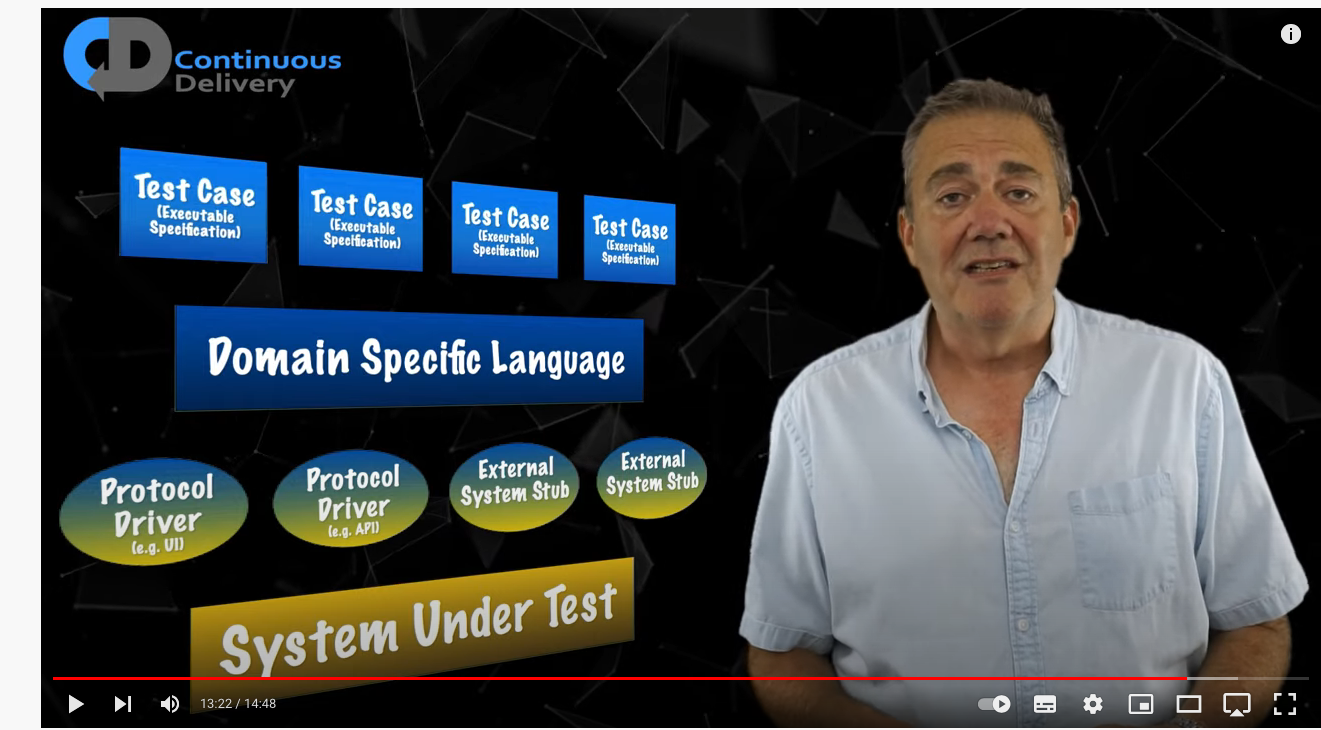

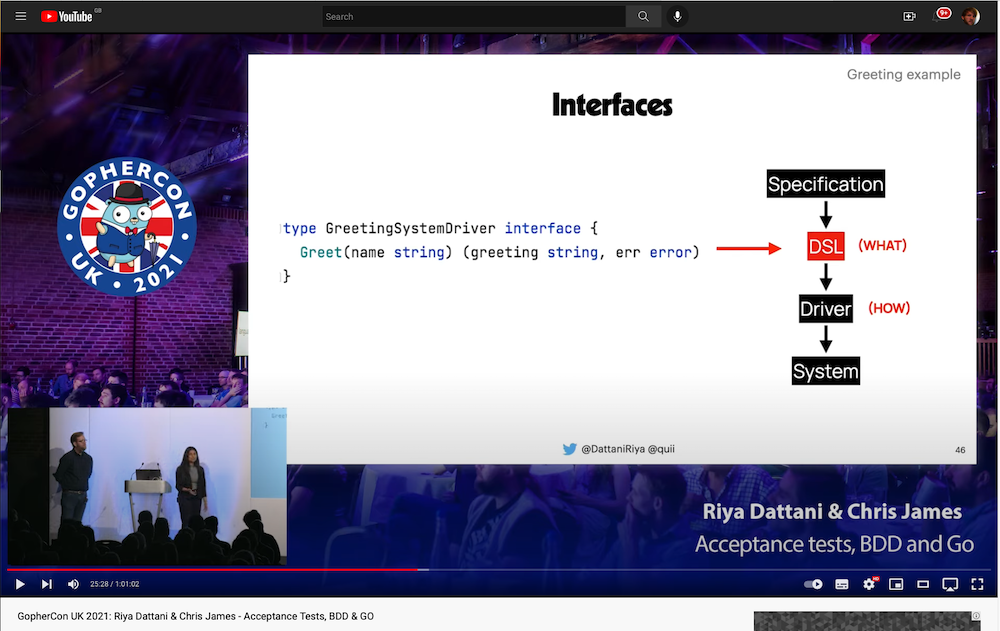

What Dave Farley proposed in the video earlier, and what Riya and I also discussed, is that we should have the idea of specifications. Specifications describe the behaviour of the system we want without being coupled with accidental complexity or implementation detail.

This idea should feel reasonable to you. In production code, we frequently strive to separate concerns and decouple units of work. You wouldn't hesitate to introduce an interface to decouple your HTTP handler from non-HTTP concerns, would you? Let's take this same line of thinking for our acceptance tests.

Dave Farley describes a specific structure.

At GopherCon UK, Riya and I put this in Go terms.

Testing on steroids

Decoupling how the specification is executed allows us to reuse it in different scenarios. We can:

Make our drivers configurable

This means you can run your ATs locally, in your staging and (ideally) production environments.

Too many teams engineer their systems such that acceptance tests are impossible to run locally. This introduces an intolerably slow feedback loop. Wouldn't you rather be confident your ATs will pass before integrating your code? If the tests start breaking, is it acceptable that you'd be unable to reproduce the failure locally and instead, have to commit changes and cross your fingers that it'll pass 20 minutes later in a different environment?

Remember, just because your tests pass in staging doesn't mean your system will work. Dev/Prod parity is, at best, a white lie. I test in prod.

There are always differences between the environments that can affect the behaviour of your system. A CDN could have some cache headers incorrectly set; a downstream service you depend on may behave differently; a configuration value may be incorrect. But wouldn't it be nice if you could run your specifications in prod to catch these problems quickly?

Plug in different drivers to test other parts of your system

This flexibility allows us to test behaviours at different abstraction and architectural layers, which allows us to have more focused tests beyond black-box tests.

For instance, you may have a web page with an API behind it. Why not use the same specification to test both? You can use a headless web browser for the web page, and HTTP calls for the API.

Taking this idea further, ideally, we want the code to model essential complexity (as "domain" code) so we should also be able to use our specifications for unit tests. This will give swift feedback that the essential complexity in our system is modelled and behaves correctly.

Acceptance tests changing for the right reasons

With this approach, the only reason for your specifications to change is if the behaviour of the system changes, which is reasonable.

If your HTTP API has to change, you have one obvious place to update: the driver.

If your markup changes, again, update the specific driver.

As your system grows, you'll find yourself reusing drivers for multiple tests, which again means if implementation detail changes, you only have to update one, usually obvious place.

When done right, this approach gives us flexibility in our implementation detail and stability in our specifications. Importantly, it provides a simple and obvious structure for managing change, which becomes essential as a system and its team grow.

Acceptance tests as a method for software development

In our talk, Riya and I discussed acceptance tests and their relation to BDD. We talked about how starting your work by trying to understand the problem you're trying to solve and expressing it as a specification helps focus your intent and is a great way to start your work.

I was first introduced to this way of working in GOOS. A while ago, I summarised the ideas on my blog. Here is an extract from my post Why TDD:

TDD is focused on letting you design for the behaviour you precisely need, iteratively. When starting a new area, you must identify a key, necessary behaviour and aggressively cut scope.

Follow a "top-down" approach, starting with an acceptance test (AT) that exercises the behaviour from the outside. This will act as a north-star for your efforts. All you should be focused on is making that test pass. This test will likely be failing for a while whilst you develop enough code to make it pass.

Once your AT is set up, you can break into the TDD process to drive out enough units to make the AT pass. The trick is to not worry too much about design at this point; get enough code to make the AT pass because you're still learning and exploring the problem.

Taking this first step is often more extensive than you think, setting up web servers, routing, configuration, etc., which is why keeping the scope of the work small is essential. We want to make that first positive step on our blank canvas and have it backed by a passing AT so we can continue to iterate quickly and safely.

As you develop, listen to your tests, and they should give you signals to help you push your design in a better direction but, again, anchored to the behaviour rather than our imagination.

Typically, your first "unit" that does the hard work to make the AT pass will grow too big to be comfortable, even for this small amount of behaviour. This is when you can start thinking about how to break the problem down and introduce new collaborators.

This is where test doubles (e.g. fakes, mocks) are handy because most of the complexity that lives internally within software doesn't usually reside in implementation detail but "between" the units and how they interact.

The perils of bottom-up

This is a "top-down" approach rather than a "bottom-up" one. Bottom-up has its uses, but it carries an element of risk. By building "services" and code without quickly integrating them into your application, and without verifying them with a high-level test, you risk wasting lots of effort on unvalidated ideas.

This is a crucial property of the acceptance-test-driven approach, using tests to get real validation of our code.

Too many times, I've encountered engineers who have built a chunk of code in isolation, bottom-up, that they think is going to solve a job, but it:

Doesn't work how we want it to

Does stuff we don't need

Doesn't integrate easily

Requires a ton of re-writing anyway

This is waste.

Enough talk, time to code

Unlike other chapters, you'll need Docker installed because we'll be running our applications in containers. It's assumed at this point in the book you're comfortable writing Go code, importing from different packages, etc.

Create a new project with go mod init github.com/quii/go-specs-greet (you can put whatever you like here but if you change the path you will need to change all internal imports to match)

Make a folder specifications to hold our specifications, and add a file greet.go

My IDE (Goland) takes care of the fuss of adding dependencies for me, but if you need to do it manually, you'd do

go get github.com/alecthomas/assert/v2

Given Farley's acceptance test design (Specification->DSL->Driver->System), we have now decoupled our specification from implementation. It doesn't know or care about how we Greet; it's just concerned with the essential complexity of our domain. Admittedly, this complexity isn't much right now, but we'll expand upon the spec to add more functionality as we further iterate. It's always important to start small!

You could view the interface as our first step of a DSL; as the project grows, you may find the need to abstract differently, but for now, this is fine.

At this point, this level of ceremony to decouple our specification from implementation might make some people accuse us of "overly abstracting". I promise you that acceptance tests that are too coupled to implementation become a real burden on engineering teams. I am confident that most acceptance tests out in the wild are expensive to maintain because of this inappropriate coupling, rather than because they are overly abstract.

We can use this specification to verify any "system" that can Greet.
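As a minimal sketch of that first specification, assuming the system should reply with "Hello, world", the interface and specification could look like the following. It uses the standard library's t.Fatalf and t.Errorf instead of alecthomas/assert so it compiles without extra dependencies, and it is written as package main so it stands alone (in the project it would be package specifications). staticGreeter is a hypothetical stand-in "system" showing that anything with a matching Greet method can be plugged in.

```go
package main

import "testing"

// Greeter is the tiny DSL the specification speaks: anything that can Greet.
type Greeter interface {
	Greet() (string, error)
}

// GreetSpecification describes the behaviour we want without knowing
// anything about HTTP, gRPC or other accidental complexity.
// The expected greeting is an assumption for this sketch.
func GreetSpecification(t testing.TB, greeter Greeter) {
	t.Helper()

	got, err := greeter.Greet()
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if got != "Hello, world" {
		t.Errorf("got %q, want %q", got, "Hello, world")
	}
}

// staticGreeter is a hypothetical, trivial system used only to show that
// any type with a matching Greet method satisfies Greeter.
type staticGreeter struct{}

func (staticGreeter) Greet() (string, error) { return "Hello, world", nil }

// Compile-time check that staticGreeter satisfies Greeter.
var _ Greeter = staticGreeter{}
```

In a Go test, GreetSpecification(t, someDriver) is all it takes to run the specification against a real system.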

First system: HTTP API

We need to provide a "greeter service" over HTTP. So we'll need to create:

A driver. In this case, one works with an HTTP system by using an HTTP client. This code will know how to work with our API. Drivers translate DSLs into system-specific calls; in our case, the driver will implement the interface that the specifications define.

An HTTP server with a greet API

A test, which is responsible for managing the life-cycle of spinning up the server and then plugging the driver into the specification to run it as a test

Write the test first

The initial process for creating a black-box test that compiles and runs your program, executes the test and then cleans everything up can be quite labour-intensive. That's why it's preferable to do it at the start of your project with minimal functionality. I typically start all my projects with a "hello world" server implementation, with all of my tests set up and ready for me to build the actual functionality quickly.

The mental model of "specifications", "drivers", and "acceptance tests" can take a little time to get used to, so follow carefully. It can be helpful to "work backwards" by trying to call the specification first.

Create some structure to house the program we intend to ship.

mkdir -p cmd/httpserver

Inside the new folder, create a new file greeter_server_test.go, and add the following.

We wish to run our specification in a Go test. We already have access to a *testing.T, so that's the first argument, but what about the second?

specifications.Greeter is an interface, which we will implement with a Driver by changing the new TestGreeterServer code to the following:

It would be favourable for our Driver to be configurable to run it against different environments, including locally, so we have added a BaseURL field.

Try to run the test

We're still practising TDD here! It's a big first step we have to make; we need to make a few files and write maybe more code than we're typically used to, but when you're first starting, this is often the case. It's so important we try to remember the red step's rules.

Commit as many sins as necessary to get the test passing

Write the minimal amount of code for the test to run and check the failing test output

Hold your nose; remember, we can refactor when the test has passed. Here's the code for the driver in driver.go which we will place in the project root:
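A minimal sketch of such a driver, assuming the server exposes the greeting on a /greet path (the path is an assumption, not something stated here). It is written as package main so it compiles on its own; in the project it would belong to the go_specs_greet package.

```go
package main

import (
	"io"
	"net/http"
)

// Driver translates the Greeter DSL into HTTP calls against a running server.
// BaseURL makes it configurable, so the same driver can target a local
// container, staging or production.
type Driver struct {
	BaseURL string
}

// Greet calls the (assumed) /greet endpoint and returns the response body.
func (d Driver) Greet() (string, error) {
	// Uses http.Get, i.e. the default client, as a deliberate shortcut for now.
	res, err := http.Get(d.BaseURL + "/greet")
	if err != nil {
		return "", err
	}
	defer res.Body.Close()

	greeting, err := io.ReadAll(res.Body)
	if err != nil {
		return "", err
	}
	return string(greeting), nil
}
```

With no server running, Greet simply returns the connection error, which is exactly the "compiles but fails" state we want at this step.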

Notes:

You could argue that I should be writing tests to drive out the various if err != nil checks, but in my experience, so long as you're not doing anything with the err, tests that say "you return the error you get" are relatively low value.

You shouldn't use the default HTTP client. Later we'll pass in an HTTP client to configure it with timeouts etc., but for now, we're just trying to get ourselves to a passing test.

In our greeter_server_test.go we used the Driver type from the go_specs_greet package, which we have now created; don't forget to add github.com/quii/go-specs-greet to its imports. Try and rerun the tests; they should now compile but not pass.

We have a Driver, but we have not started our application yet, so it cannot do an HTTP request. We need our acceptance test to coordinate building, running and finally killing our system for the test to run.

Running our application

It's common for teams to build Docker images of their systems to deploy, so for our test we'll do the same.

To help us use Docker in our tests, we will use Testcontainers. Testcontainers gives us a programmatic way to build Docker images and manage container life-cycles.

go get github.com/testcontainers/testcontainers-go

Now you can edit cmd/httpserver/greeter_server_test.go to read as follows:

Try and run the test.

We need to create a Dockerfile for our program. Inside our httpserver folder, create a Dockerfile and add the following.

Don't worry too much about the details here; it can be refined and optimised, but for this example, it'll suffice. The advantage of our approach here is we can later improve our Dockerfile and have a test to prove it works as we intend it to. This is a real strength of having black-box tests!

Try and rerun the test; it should complain about not being able to build the image. Of course, that's because we haven't written a program to build yet!

For the test to fully execute, we'll need to create a program that listens on 8080, but that's all. Stick to the TDD discipline: don't write the production code that would make the test pass until we've verified the test fails as we'd expect.

Create a main.go inside our httpserver folder with the following

Try to run the test again, and it should fail with the following.

Write enough code to make it pass

Update the handler to behave how our specification wants it to

Refactor

Whilst this technically isn't a refactor, we shouldn't rely on the default HTTP client, so let's change our Driver so that we can supply one, which our test will provide.

In our test in cmd/httpserver/greeter_server_test.go, update the creation of the driver to pass in a client.

It's good practice to keep main.go as simple as possible; it should only be concerned with piecing together the building blocks you make into an application.

Create a file in the project root called handler.go and move our code into there.

Update main.go to import and use the handler instead.

Reflect

The first step felt like an effort. We've made several Go files to create and test an HTTP handler that returns a hard-coded string. This "iteration 0" ceremony and setup will serve us well for further iterations.

Changing functionality should be simple and controlled by driving it through the specification and dealing with whatever changes it forces us to make. Now that the Dockerfile and Testcontainers are set up for our acceptance test, we shouldn't have to change these files unless the way we construct our application changes.

We'll see this with our next requirement: greeting a particular person.

Write the test first

Edit our specification

To allow us to greet specific people, we need to change the interface to our system to accept a name parameter.

Try to run the test

The change in the specification has meant our driver needs to be updated.

Write the minimal amount of code for the test to run and check the failing test output

Update the driver so that it specifies a name query value in the request to ask for a particular name to be greeted.

The test should now run, and fail.

Write enough code to make it pass

Extract the name from the request and greet.

The test should now pass.

Refactor

In HTTP Handlers Revisited, we discussed how important it is that HTTP handlers are only responsible for handling HTTP concerns; any "domain logic" should live outside of the handler. This allows us to develop domain logic in isolation from HTTP, making it simpler to test and understand.

Let's pull apart these concerns.

Update our handler in ./handler.go as follows:

Create new file ./greet.go:
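A minimal sketch of the split, assuming the greeting format is "Hello, <name>" (the exact wording is an assumption). Both pieces are shown in one package main file so it compiles alone; in the project, Greet would live in ./greet.go and Handler in ./handler.go.

```go
package main

import (
	"fmt"
	"net/http"
)

// Greet is domain code: it knows nothing about HTTP, so it's trivial to unit test.
func Greet(name string) string {
	return fmt.Sprintf("Hello, %s", name)
}

// Handler is left with HTTP concerns only: read the query string,
// delegate to the domain, write the response.
func Handler(w http.ResponseWriter, r *http.Request) {
	name := r.URL.Query().Get("name")
	fmt.Fprint(w, Greet(name))
}
```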

A slight diversion into the "adapter" design pattern

Now that we've separated our domain logic of greeting people into a separate function, we are now free to write unit tests for our greet function. This is undoubtedly a lot simpler than testing it through a specification that goes through a driver that hits a web server, to get a string!

Wouldn't it be nice if we could reuse our specification here too? After all, the point of the specification is that it's decoupled from implementation details. If the specification captures our essential complexity and our "domain" code is supposed to model it, we should be able to use them together.

Let's give it a go by creating ./greet_test.go as follows:

This would be nice, but it doesn't work

Our specification wants something that has a Greet() method, not a function.

The compilation error is frustrating; we have a thing that we "know" is a Greeter, but it's not quite in the right shape for the compiler to let us use it. This is what the adapter pattern caters for.

In software engineering, the adapter pattern is a software design pattern (also known as wrapper, an alternative naming shared with the decorator pattern) that allows the interface of an existing class to be used as another interface.[1] It is often used to make existing classes work with others without modifying their source code.

A lot of fancy words for something relatively simple, which is often the case with design patterns, which is why people tend to roll their eyes at them. The value of design patterns is not specific implementations but a language to describe specific solutions to common problems engineers face. If you have a team that has a shared vocabulary, it reduces the friction in communication.

Add this code in ./specifications/adapters.go
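A sketch of such an adapter, assuming the Greeter interface now takes a name (matching the earlier change to the specification). GreetAdapter is an illustrative name; the idea is simply to give a plain function the method the interface asks for.

```go
package main

// Greeter is the interface the specification expects.
type Greeter interface {
	Greet(name string) (string, error)
}

// GreetAdapter adapts a plain func(string) string into a Greeter.
type GreetAdapter func(name string) string

// Greet satisfies Greeter by calling the wrapped function.
// The domain function can't fail, so the error is always nil.
func (g GreetAdapter) Greet(name string) (string, error) {
	return g(name), nil
}

// Compile-time check that the adapter satisfies Greeter.
var _ Greeter = GreetAdapter(nil)
```

The standard library uses the same trick: http.HandlerFunc is documented as an adapter that lets ordinary functions be used as HTTP handlers. With something like this in place, a unit test could pass the domain function through the adapter, along the lines of GreetSpecification(t, GreetAdapter(Greet)), with package qualifiers depending on your layout.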

We can now use our adapter in our test to plug our Greet function into the specification.

The adapter pattern is handy when you have a type that exhibits the behaviour that an interface wants, but isn't in the right shape.

Reflect

The behaviour change felt simple, right? OK, maybe it was simply due to the nature of the problem, but this method of work gives you discipline and a simple, repeatable way of changing your system from top to bottom:

Analyse your problem and identify a slight improvement to your system that pushes you in the right direction

Capture the new essential complexity in a specification

Follow the compilation errors until the AT runs

Update your implementation to make the system behave according to the specification

Refactor

After the pain of the first iteration, we didn't have to edit our acceptance test code because we have the separation of specifications, drivers and implementation. Changing our specification required us to update our driver and finally our implementation, but the boilerplate code around how to spin up the system as a container was unaffected.

Even with the overhead of building a docker image for our application and spinning up the container, the feedback loop for testing our entire application is very tight:

Now, imagine your CTO has decided that gRPC is the future. She wants you to expose this same functionality over a gRPC server whilst maintaining the existing HTTP server.

This is an example of accidental complexity. Remember, accidental complexity is the complexity we have to deal with because we're working with computers, stuff like networks, disks, APIs, etc. The essential complexity has not changed, so we shouldn't have to change our specifications.

Many repository structures and design patterns are mainly dealing with separating types of complexity. For instance, "ports and adapters" asks that you separate your domain code from anything to do with accidental complexity; that code lives in an "adapters" folder.

Making the change easy

Sometimes, it makes sense to do some refactoring before making a change.

First make the change easy, then make the easy change

~Kent Beck

For that reason, let's move our http code - driver.go and handler.go - into a package called httpserver within an adapters folder and change their package names to httpserver.

You'll now need to import the root package into handler.go to refer to the Greet function...

Import your httpserver adapter into main.go:

and update the import and reference to Driver in greeter_server_test.go:

Finally, it's helpful to gather our domain-level code into its own folder too. Don't be lazy and have a domain folder in your projects with hundreds of unrelated types and functions. Make an effort to think about your domain and group ideas that belong together, together. This will make your project easier to understand and will improve the quality of your imports.

Rather than seeing

Which is just a bit weird, instead favour

Create a domain folder to house all your domain code, and within it, an interactions folder. Depending on your tooling, you may have to update some imports and code.

Our project tree should now look like this:

Our domain code, essential complexity, lives at the root of our go module, and code that will allow us to use them in "the real world" are organised into adapters. The cmd folder is where we can compose these logical groupings into practical applications, which have black-box tests to verify it all works. Nice!

Finally, we can do a little tidying up of our acceptance test. If you consider the high-level steps of our acceptance test:

Build a docker image

Wait for it to be listening on some port

Create a driver that understands how to translate the DSL into system specific calls

Plug the driver into the specification

... you'll realise we have the same requirements for an acceptance test for the gRPC server!

The adapters folder seems as good a place as any, so inside a file called docker.go, encapsulate the first two steps in a function that we'll reuse next.

This gives us an opportunity to clean up our acceptance test a little

This should make writing the next test simpler.

Write the test first

This new functionality can be accomplished by creating a new adapter to interact with our domain code. For that reason we:

Shouldn't have to change the specification;

Should be able to reuse the specification;

Should be able to reuse the domain code.

Create a new folder grpcserver inside cmd to house our new program and the corresponding acceptance test. Inside cmd/grpcserver/greeter_server_test.go, add an acceptance test, which looks very similar to our HTTP server test, not by coincidence but by design.

The only differences are:

We use a different Dockerfile, because we're building a different program

This means we'll need a new Driver that'll use gRPC to interact with our new program

Try to run the test

We haven't created a Driver yet, so it won't compile.

Write the minimal amount of code for the test to run and check the failing test output

Create a grpcserver folder inside adapters and inside it create driver.go

If you run again, it should now compile but not pass because we haven't created a Dockerfile and corresponding program to run.

Create a new Dockerfile inside cmd/grpcserver.

And a main.go

You should find now that the test fails because our server is not listening on the port. Now is the time to start building our client and server with gRPC.

Write enough code to make it pass

gRPC

If you're unfamiliar with gRPC, I'd start by looking at the gRPC website. Still, for this chapter, it's just another kind of adapter into our system, a way for other systems to call (remote procedure call) our excellent domain code.

The twist is you define a "service definition" using Protocol Buffers. You then generate server and client code from the definition. This not only works for Go but for most mainstream languages too. This means you can share a definition with other teams in your company who may not even write Go and can still do service-to-service communication smoothly.

If you haven't used gRPC before, you'll need to install a Protocol buffer compiler and some Go plugins. The gRPC website has clear instructions on how to do this.

Inside the same folder as our new driver, add a greet.proto file with the following

To understand this definition, you don't need to be an expert in Protocol Buffers. We define a service with a Greet method and then describe the incoming and outgoing message types.

Inside adapters/grpcserver run the following to generate the client and server code

If it worked, we now have some generated code to use. Let's start by using the generated client code inside our Driver.

Now that we have a client, we need to update our main.go to create a server. Remember, at this point we're just trying to get our test to pass and not worrying about code quality.

To create our gRPC server, we have to implement the interface it generated for us

Our main function:

Listens on a port

Creates a GreetServer that implements the interface, and then registers it with grpcServer.RegisterGreeterServer, along with a grpc.Server

Uses the server with the listener

It wouldn't be a massive extra effort to call our domain code inside greetServer.Greet rather than hard-coding fix-me in the message, but I'd like to run our acceptance test first to see if everything is working on a transport level and verify the failing test output.

Nice! We can see our driver is able to connect to our gRPC server in the test.

Now, call our domain code inside our GreetServer

Finally, it passes! We have an acceptance test that proves our gRPC greet server behaves how we'd like.

Refactor

We committed several sins to get the test passing, but now that it's passing, we have the safety net to refactor.

Simplify main

As before, we don't want main to have too much code inside it. We can move our new GreetServer into adapters/grpcserver as that's where it should live. In terms of cohesion, if we change the service definition, we want the "blast-radius" of change to be confined to that area of our code.

Don't redial in our driver every time

We only have one test, but if we expand our specification (we will), it doesn't make sense for the Driver to redial for every RPC call.

Here we're showing how we can use sync.Once to ensure our Driver only attempts to create a connection to our server once.

Let's take a look at the current state of our project structure before moving on.

adapters have cohesive units of functionality grouped together

cmd holds our applications and corresponding acceptance tests

Our code is totally decoupled from any accidental complexity

Consolidating Dockerfile

You've probably noticed the two Dockerfiles are almost identical beyond the path to the binary we wish to build.

Dockerfiles can accept arguments to let us reuse them in different contexts, which sounds perfect. We can delete our 2 Dockerfiles and instead have one at the root of the project with the following

We'll have to update our StartDockerServer function to pass in the argument when we build the images

And finally, update our tests to pass in the image to build (do this for the other test and change grpcserver to httpserver).

Separating different kinds of tests

Acceptance tests are great in that they test the whole system works from a pure user-facing, behavioural POV, but they do have their downsides compared to unit tests:

Slower

Quality of feedback is often not as focused as a unit test

Doesn't help you with internal quality, or design

The Test Pyramid guides us on the kind of mix we want for our test suite; you should read Fowler's post for more detail, but the very simplistic summary for this post is "lots of unit tests and a few acceptance tests".

For that reason, as a project grows, you may often find that the acceptance tests take a few minutes to run. To offer a friendly developer experience for people checking out your project, you can enable developers to run the different kinds of tests separately.

It's preferable that go test ./... can be run with no further setup from an engineer, beyond, say, a few key dependencies such as the Go compiler (obviously) and perhaps Docker.

Go provides a mechanism for engineers to run only "short" tests with the short flag

go test -short ./...

We can add a check to our acceptance tests to see whether the user wants to run them, by inspecting the value of the flag
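A minimal sketch of that check. testing.Short reports whether the -short flag was set and t.Skip marks the test as skipped; the skip message is illustrative, and the body is left as a comment because it would hold the container setup from earlier. In the project this would sit in a _test.go file such as cmd/httpserver/greeter_server_test.go.

```go
package main

import "testing"

// TestGreeterServer sketches how an acceptance test can opt out when the
// suite is run with `go test -short ./...`.
func TestGreeterServer(t *testing.T) {
	if testing.Short() {
		t.Skip("skipping acceptance test because -short was set")
	}
	// Build the image, start the container, create the driver and
	// run the specification here.
}
```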

I made a Makefile to show this usage.

When should I write acceptance tests?

The best practice is to favour lots of fast-running unit tests and a few acceptance tests, but how do you decide when to write an acceptance test rather than unit tests?

It's difficult to give a concrete rule, but the questions I typically ask myself are:

Is this an edge case? I'd prefer to unit test those.

Is this something that the non-computer people talk about a lot? I would prefer to have a lot of confidence that the key thing "really" works, so I'd add an acceptance test.

Am I describing a user journey, rather than a specific function? Acceptance test.

Would unit tests give me enough confidence? Sometimes you're taking an existing journey that already has an acceptance test, but you're adding other functionality to deal with different scenarios due to different inputs. In this case, adding another acceptance test adds a cost but brings little value, so I'd prefer some unit tests.

Iterating on our work

With all this effort, you'd hope extending our system will now be simple. Making a system that is simple to work on is not necessarily easy, but it's worth the time, and it's substantially easier to do when you start a project.

Let's extend our API to include a "curse" functionality.

Write the test first

This is brand-new behaviour, so we should start with an acceptance test. In our specification file, add the following
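A sketch of what the specification could look like, assuming it follows the same shape as the greeting specification: a function that takes a test handle and anything able to curse. The `MeanGreeter` interface and the expected curse text are assumptions. `TB` is a minimal stand-in for `testing.TB` (`*testing.T` satisfies it) so the snippet runs without `go test`; the fake types in `main` exist only to exercise the specification.

```go
package main

import "fmt"

// TB is the subset of testing.TB the specification needs. *testing.T
// satisfies it, so the same specification can run in a real test.
type TB interface {
	Helper()
	Fatalf(format string, args ...any)
}

// MeanGreeter is implemented by anything that can curse someone: domain
// code, a gRPC driver, an HTTP driver, and so on.
type MeanGreeter interface {
	Curse(name string) (string, error)
}

// CurseSpecification describes the behaviour in domain terms only; it knows
// nothing about gRPC, HTTP or Docker. The expected text is illustrative.
func CurseSpecification(t TB, meany MeanGreeter) {
	t.Helper()
	got, err := meany.Curse("Chris")
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if want := "Go to hell, Chris!"; got != want {
		t.Fatalf("got %q, want %q", got, want)
	}
}

// fakeT records failures. Unlike (*testing.T).Fatalf, it does not stop
// execution; it exists only so the sketch can run from main.
type fakeT struct{ failures []string }

func (f *fakeT) Helper() {}
func (f *fakeT) Fatalf(format string, args ...any) {
	f.failures = append(f.failures, fmt.Sprintf(format, args...))
}

// stubCurser is a stand-in implementation used to exercise the specification.
type stubCurser struct{}

func (stubCurser) Curse(name string) (string, error) {
	return fmt.Sprintf("Go to hell, %s!", name), nil
}

func main() {
	ft := &fakeT{}
	CurseSpecification(ft, stubCurser{})
	fmt.Println("failures:", ft.failures)
}
```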

Pick one of our acceptance tests and try to use the specification

Try to run the test

Our Driver doesn't support Curse yet.

Write the minimal amount of code for the test to run and check the failing test output

Remember we're just trying to get the test to run, so add the method to Driver
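At this point the method only needs to exist. A minimal sketch, assuming the gRPC `Driver` is a struct holding the server address (the `Addr` field is hypothetical); the `MeanGreeter` interface is repeated so the snippet compiles on its own.

```go
package main

import "fmt"

// MeanGreeter mirrors the interface the specification depends on.
type MeanGreeter interface {
	Curse(name string) (string, error)
}

// Driver stands in for the gRPC driver; the Addr field is an assumption.
type Driver struct {
	Addr string
}

// Curse is deliberately empty: it exists so the test compiles, runs and fails
// with a meaningful assertion message rather than a compilation error.
func (d Driver) Curse(name string) (string, error) {
	return "", nil
}

// Compile-time check that Driver can be passed to the specification.
var _ MeanGreeter = Driver{}

func main() {
	got, err := Driver{Addr: "localhost:50051"}.Curse("Chris")
	fmt.Printf("got %q, err %v\n", got, err)
}
```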

If you try again, the test should compile, run, and fail

Write enough code to make it pass

We'll need to update our protocol buffer specification to have a Curse method on it, and then regenerate our code.

You could argue that reusing the types GreetRequest and GreetReply is inappropriate coupling, but we can deal with that in the refactoring stage. As I keep stressing, we're just trying to get the test passing, so we verify the software works, then we can make it nice.

Regenerate our code from inside adapters/grpcserver.

Update driver

Now that the client code has been updated, we can call Curse in our Driver.
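A sketch of the driver delegating to the generated client. To keep it self-contained, `GreetRequest`, `GreetReply` and `GreeterClient` are hand-written stand-ins for the code protoc generates (their field and type names are assumptions; generated client methods also accept `...grpc.CallOption`, and the real driver would build its client from a gRPC connection). Reusing `GreetRequest` and `GreetReply` for `Curse` follows the text above.

```go
package main

import (
	"context"
	"fmt"
)

// Stand-ins for the generated protobuf message types.
type GreetRequest struct{ Name string }
type GreetReply struct{ Message string }

// GreeterClient stands in for the generated gRPC client interface.
type GreeterClient interface {
	Greet(ctx context.Context, in *GreetRequest) (*GreetReply, error)
	Curse(ctx context.Context, in *GreetRequest) (*GreetReply, error)
}

// Driver translates domain-level calls into gRPC calls.
type Driver struct {
	client GreeterClient
}

// Curse wraps the name in a request, calls the server and unwraps the reply.
func (d Driver) Curse(name string) (string, error) {
	reply, err := d.client.Curse(context.Background(), &GreetRequest{Name: name})
	if err != nil {
		return "", err
	}
	return reply.Message, nil
}

// fakeClient replaces the network so the sketch runs on its own. Greet is
// included only so it satisfies the interface.
type fakeClient struct{}

func (fakeClient) Greet(_ context.Context, in *GreetRequest) (*GreetReply, error) {
	return &GreetReply{Message: "Hello, " + in.Name}, nil
}

func (fakeClient) Curse(_ context.Context, in *GreetRequest) (*GreetReply, error) {
	return &GreetReply{Message: "Go to hell, " + in.Name + "!"}, nil
}

func main() {
	got, err := Driver{client: fakeClient{}}.Curse("Chris")
	fmt.Println(got, err)
}
```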

Update server

Finally, we need to add the Curse method to our Server

The tests should now pass.

Refactor

Try doing this yourself.

Extract the Curse "domain logic" away from the gRPC server, as we did for Greet. Use the specification as a unit test against your domain logic.

Have different types in the protobuf to ensure the message types for Greet and Curse are decoupled.
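One way the refactored domain code could look. `Curse` is a plain function with no knowledge of gRPC, and the hypothetical `CurseAdapter` function type lets it satisfy the `MeanGreeter` interface, so the specification can drive it as a unit test. The names and message text are illustrative, and `TB` is a minimal stand-in for `testing.TB` (satisfied by `*testing.T`) so the snippet runs outside `go test`.

```go
package main

import "fmt"

// Curse is pure domain logic: no gRPC, HTTP or Docker in sight.
func Curse(name string) string {
	return fmt.Sprintf("Go to hell, %s!", name)
}

// MeanGreeter is what the specification depends on.
type MeanGreeter interface {
	Curse(name string) (string, error)
}

// CurseAdapter lets a plain function satisfy MeanGreeter, so the
// specification can be used as a unit test against the domain code.
type CurseAdapter func(name string) string

func (c CurseAdapter) Curse(name string) (string, error) {
	return c(name), nil
}

// TB is a minimal stand-in for testing.TB; *testing.T satisfies it.
type TB interface {
	Helper()
	Fatalf(format string, args ...any)
}

// CurseSpecification is the same domain-level specification the acceptance
// tests use.
func CurseSpecification(t TB, meany MeanGreeter) {
	t.Helper()
	got, err := meany.Curse("Chris")
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if want := "Go to hell, Chris!"; got != want {
		t.Fatalf("got %q, want %q", got, want)
	}
}

// panicT turns failures into panics so the sketch can run from main.
type panicT struct{}

func (panicT) Helper() {}
func (panicT) Fatalf(format string, args ...any) {
	panic(fmt.Sprintf(format, args...))
}

func main() {
	// In a real _test.go file: CurseSpecification(t, CurseAdapter(Curse))
	CurseSpecification(panicT{}, CurseAdapter(Curse))
	fmt.Println("domain logic satisfies the specification")
}
```

Because the specification only sees the `MeanGreeter` interface, the same function now checks the domain code in milliseconds and the real system via the drivers.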

Implementing Curse for the HTTP server

Again, an exercise for you, the reader. We have our domain-level specification and our domain-level logic neatly separated. If you've followed this chapter, this should be very straightforward.

Add the specification to the existing acceptance test for the HTTP server.

Update your Driver.

Add the new endpoint to the server, and reuse the domain code to implement the functionality. You may wish to use http.NewServeMux to handle the routeing to the separate endpoints.
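A sketch of the routeing with `http.NewServeMux`. It assumes the name arrives as a `name` query parameter and that handlers write plain text, so match whatever your existing greet handler does. `Greet` and `Curse` stand in for the domain functions (their message formats are assumptions), and `httptest` is used only to exercise the routes in memory.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// Stand-ins for the domain functions; the message formats are assumptions.
func Greet(name string) string { return fmt.Sprintf("Hello, %s", name) }
func Curse(name string) string { return fmt.Sprintf("Go to hell, %s!", name) }

// NewHandler routes each endpoint to a thin adapter over the domain code.
func NewHandler() http.Handler {
	mux := http.NewServeMux()
	mux.HandleFunc("/greet", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, Greet(r.URL.Query().Get("name")))
	})
	mux.HandleFunc("/curse", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, Curse(r.URL.Query().Get("name")))
	})
	return mux
}

// get performs a request against the handler in memory and returns the body.
func get(h http.Handler, target string) string {
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, httptest.NewRequest(http.MethodGet, target, nil))
	return rec.Body.String()
}

func main() {
	h := NewHandler()
	fmt.Println(get(h, "/greet?name=Chris"))
	fmt.Println(get(h, "/curse?name=Chris"))
}
```

In the real server you would pass the handler to `http.ListenAndServe`; keeping each handler a one-liner over the domain code means the HTTP layer stays pure accidental complexity.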

Remember to work in small steps, and commit and run your tests frequently. If you get really stuck, you can find my implementation on GitHub.

Enhance both systems by updating the domain logic with a unit test

As mentioned, not every change to a system should be driven via an acceptance test. Permutations of business rules and edge cases should be simple to drive via a unit test if you have separated concerns well.

Add a unit test to our Greet function to default the name to World if it is empty. You should see how simple this is, and the business rule is then reflected in both applications for "free".
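A minimal sketch of the change, assuming `Greet` is a plain function that formats a greeting (the exact message format is an assumption). `TestGreet` is the table-driven unit test you would put in a _test.go file; `main` loops over the same cases so the snippet runs on its own.

```go
package main

import (
	"fmt"
	"testing"
)

// Greet returns a greeting, defaulting the name to "World" when it is empty.
func Greet(name string) string {
	if name == "" {
		name = "World"
	}
	return fmt.Sprintf("Hello, %s", name)
}

// greetCases drives both the unit test and the demo in main.
var greetCases = []struct {
	name, want string
}{
	{name: "Chris", want: "Hello, Chris"},
	{name: "", want: "Hello, World"},
}

// TestGreet belongs in a _test.go file and is run by `go test`.
func TestGreet(t *testing.T) {
	for _, tc := range greetCases {
		if got := Greet(tc.name); got != tc.want {
			t.Errorf("Greet(%q) = %q, want %q", tc.name, got, tc.want)
		}
	}
}

func main() {
	for _, tc := range greetCases {
		got := Greet(tc.name)
		fmt.Printf("Greet(%q) = %q, ok: %v\n", tc.name, got, got == tc.want)
	}
}
```

Because both the gRPC and HTTP servers call the same `Greet`, neither adapter needs to change for the new rule.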

Wrapping up

Building systems with a reasonable cost of change requires you to have ATs engineered to help you, not become a maintenance burden. They can be used as a means of guiding or, as GOOS says, "growing" your software methodically.

Hopefully, this example shows a predictable, structured workflow for driving change in our application, and how you could use it in your own work.

You can imagine talking to a stakeholder who wants to extend the system you work on in some way. Capture it in a domain-centric, implementation-agnostic way in a specification, and use it as a north star towards your efforts. Riya and I describe leveraging BDD techniques like "Example Mapping" in our GopherCon UK talk to help you understand the essential complexity more deeply and allow you to write more detailed and meaningful specifications.

Separating essential and accidental complexity concerns will make your work less ad-hoc and more structured and deliberate; this ensures the resiliency of your acceptance tests and helps them become less of a maintenance burden.

Dave Farley gives an excellent tip:

Imagine the least technical person that you can think of, who understands the problem-domain, reading your Acceptance Tests. The tests should make sense to that person.

Specifications should then double up as documentation. They should specify clearly how a system should behave. This idea is the principle behind tools like Cucumber, which offers you a DSL for capturing behaviours as code; you then convert that DSL into system calls, just like we did here.

What has been covered

Writing abstract specifications allows you to express the essential complexity of the problem you're solving and remove accidental complexity. This will enable you to reuse the specifications in different contexts.

How to use Testcontainers to manage the life-cycle of your system for ATs. This allows you to thoroughly test the image you intend to ship on your computer, giving you fast feedback and confidence.

A brief intro to containerising your application with Docker

gRPC

Rather than chasing canned folder structures, you can use your development approach to naturally drive out the structure of your application, based on your own needs

Further material

In this example, our "DSL" is not much of a DSL; we just used interfaces to decouple our specification from the real world and allow us to express domain logic cleanly. As your system grows, this level of abstraction might become clumsy and unclear. Read into the "Screenplay Pattern" if you want to find more ideas as to how to structure your specifications.

For emphasis, Growing Object-Oriented Software, Guided by Tests, is a classic. It demonstrates applying this "London style", "top-down" approach to writing software. Anyone who has enjoyed Learn Go with Tests should get much value from reading GOOS.

In the example code repository, there is more code and there are ideas I haven't written about here, such as a multi-stage Docker build, which you may wish to check out.

In particular, for fun, I made a third program, a website with some HTML forms to Greet and Curse. The Driver leverages the excellent-looking https://github.com/go-rod/rod module, which allows it to work with the website with a browser, just like a user would. Looking at the git history, you can see how I started without any templating tools "just to make it work". Then, once I passed my acceptance test, I had the freedom to introduce them without fear of breaking things.
